Medal x General Intuition: How Clips And Gameplay Actions Create Spatial Intelligence

It turns out that gaming footage is one of the richest, most complex visual datasets in the world. It’s fast-moving, unpredictable, and visually dense in ways that few other types of video can match. When combined with player behavior (“player moved forward”) and gameplay actions (“goal was scored”), it can yield a deep understanding of context and spatial awareness.

Over the last few months, we have publicly announced and shared updates on our research lab, General Intuition, a Public Benefit Corporation dedicated to exploring this more deeply. Here, we want to lay out in one place what it is, what data is being used, and how it will benefit Medal.

General Intuition’s goal is to responsibly explore foundation models for spatial intelligence and contextual understanding of environments by analyzing gameplay clips. Beyond cutting-edge research, these models will make Medal better in ways that weren’t possible before.

We're doing this in service of our players, to keep our platform free, and to build product features that wouldn’t be possible otherwise. But your data belongs to you. You own it, and you decide whether to be a part of this. You can opt out at any time, which will remove all of your past data.

You can opt in or out at any time in Settings > Medal AI.

What Do We Mean By Actions And Why Are They Important?

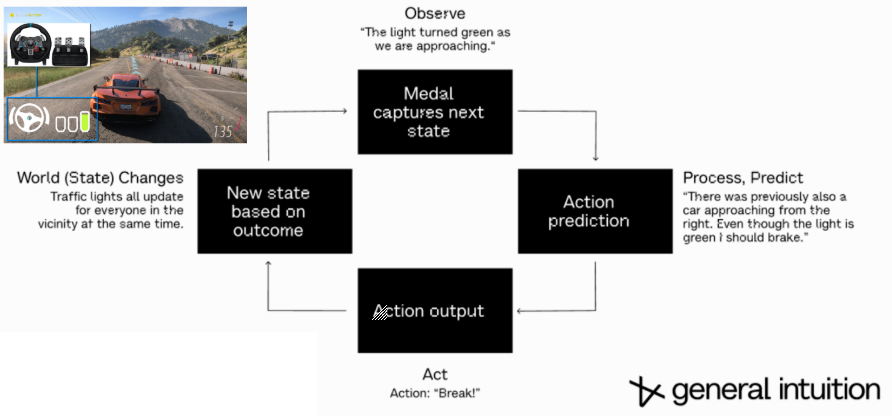

When you play a game, you're constantly making decisions: moving through space, reacting to what's on screen, timing an ability, dodging a crash. These are split-second spatial decisions, and that's precisely the type of data you need to make driving safer.

Actions are semantic descriptions of what's happening: "Player moved forward," "Goal was scored." They're not raw key presses, which have no meaning on their own (and we take privacy very seriously). Actions are the connection between what's happening on screen and what the player is doing about it.

Actions are key to building advanced contextual and spatial intelligence. That intelligence can power cutting-edge navigation systems, as well as the powerful new Medal features listed below.

To Medal Users

New Features Coming Soon™ Enabled By Research

Our mission at Medal is to make it as easy as possible to share an awesome moment or memory in your game with your friends. The models we are developing will power new features to better achieve that.

- Smart trimming

We’re tired of manually trimming every clip when the real moment is clear. We’re building models to detect natural breakpoints and key moments, which will feed into smart trimming suggestions.

- Accurate captions & subtitles

Manual subtitling takes way too long, and automatic captions are often wrong (“Their Russian bee” instead of “They’re rushing B”). If we actually understand the context, we can provide more accurate captions.

- Auto clip every game

By learning from the moments people actually clip, we can power auto clipping for every game on the planet (until some dev comes up with a crazy new mechanic we’ve never seen).

- Auto-montage

Cut and edited to perfection by understanding the key moments. Just drop clips in and get a highlight reel back.

What Data Are We Using?

These models use data generated from uploaded clips and controller overlays, including the actions (“Player moved forward”) and events (“Goal was scored”) that occur during gameplay. This type of data is necessary to understand the content of the clips and build the features listed above.

Our whole team is very privacy- and security-conscious, and we happily keep these features turned on ourselves. Still, we wanted this to be optional: you can turn it off at any time in Settings > Medal AI, with no downsides.

To Game Developers

We believe AI will never replace the human creativity behind the games we love. Game development brings together art, engineering, storytelling, and community in a way nothing else does.

Our goal is to build technology that supports that ecosystem by doing what we’ve always done: helping people share great moments from the games they know and love, faster and more easily than ever.

We have no interest in building games, game engines, or asset generation systems. General Intuition is focused on foundation models for spatial intelligence: agents that can make decisions within worlds, closer to bots that find bugs and analyze performance. Learn more here: https://www.youtube.com/@gen_intuition

About Medal

Medal is now used by 15M+ gamers every month, making it the largest clipping platform in the world. This year alone, Medal users will capture 2.5B+ clips, which in turn help games grow organically through TikTok, Discord, YouTube Shorts, Instagram, and Medal’s own discovery features.

About General Intuition

General Intuition is the frontier research lab dedicated to building foundation models for environments that require deep spatial and temporal reasoning. If you want to learn more, you can read about our research architecture or check out the following interviews we’ve done on the topic.

- Naavik Gaming Podcast: "Inside General Intuition's Push to Build Embodied AI Agents"
- Latent Space: The AI Engineer Podcast: "World Models & General Intuition: Khosla's largest bet since LLMs & OpenAI"
- RoboPapers Ep#42: "General Intuition", a deep dive into foundation models for robotics, sim2real, and why gaming clips are the unlock to spatial intelligence

And if you're interested in building these systems with us, we're hiring: https://generalintuition.com/careers
